How AI Manages to Sound So Wise

April 17, 2026

I ask chatbots for recipes and gardening advice. Bash Ahmed, a brilliant friend who works in IT, has long exploratory discussions about politics, culture, and finance. When he commented—after the president’s message to “Open the fuckin’ strait, you crazy bastards—Praise be to Allah”—that we were living in Dr. Strangelove, Claude replied that Trump’s words were “a line Kubrick would have written and the studio would have told him was too on-the-nose.

“But there’s a deeper Kubrick resonance beyond Strangelove,” Claude continued. “Kubrick’s entire body of work is about systems that produce outcomes no individual within them intended. HAL 9000 isn’t evil—he’s executing conflicting directives and the system produces murder. The soldiers in Full Metal Jacket aren’t monsters—the institution manufactures them. Alex in Clockwork Orange isn’t cured—he’s reconditioned, and the conditioning serves the state, not him.

“What we’re watching is a system—the American presidency, the military chain of command, the market, the media—that was designed with the assumption that the person at the top would have a basically functional self-structure. Not perfect, not wise, but coherent. The entire architecture assumes a minimum threshold of ego integration that allows the person to process reality, weigh consequences, and modulate behavior based on feedback.

“When that assumption fails—when the person at the apex has the specific pathology we’ve spent today mapping—the system doesn’t override him. It amplifies him.”

Bash sends me this exchange (it goes on much longer) to amuse me. Instead, I am gobsmacked—my norm, of late. It has taken me this long to realize that LLMs are not just superpowerful internet searchers, and they often have no attributable source for what they tell me. They are not assiduous little librarians. They are conjurers, predicting the next word and the next at lightning speed. Still, how did that produce this?

“It’s so hard for me to understand how predictive capability can yield this kind of smart, clever, even wise discernment,” I reply.

Bash explains that Claude was primed; their prior exchange was about “how to apply psychoanalytic insights about Trump to market strategies.” Whatever. The result still feels unnerving.

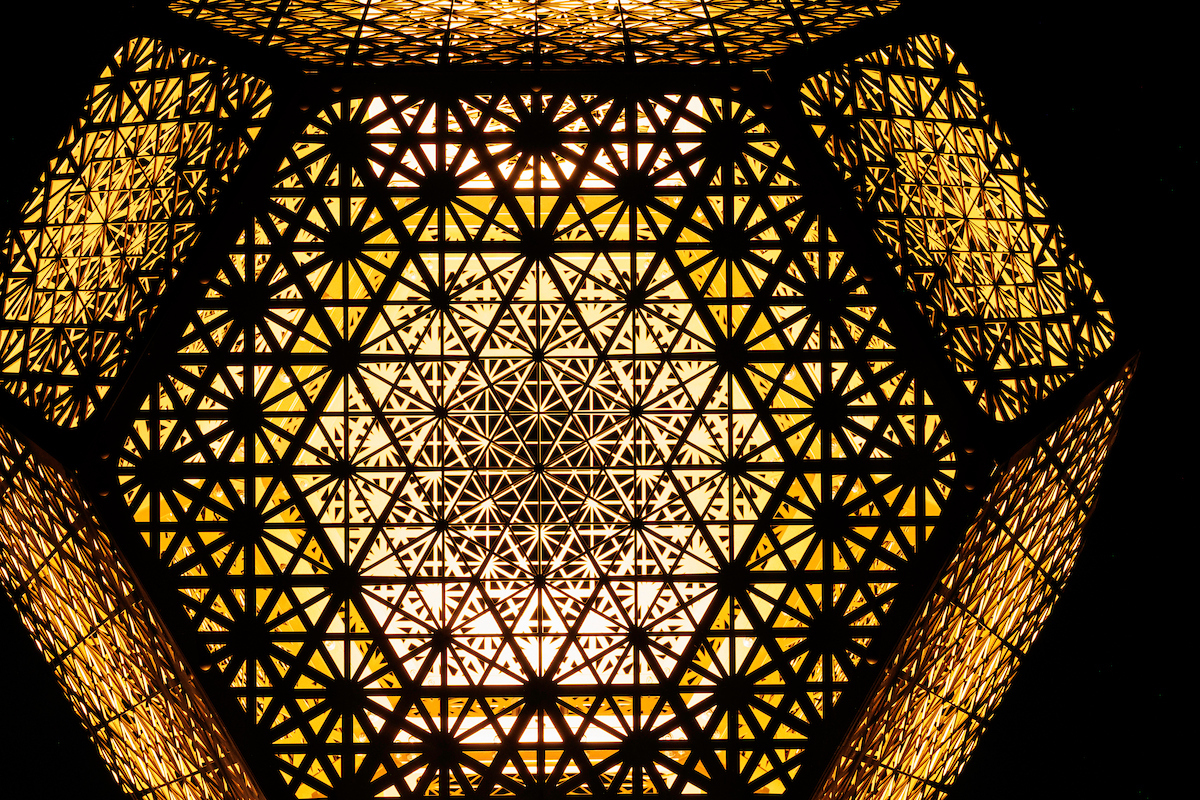

“I think what you’re missing about AI, how it can do this, is the beauty of it,” he remarks. “Both the insight of a human and the cold math of the AI draw from the same patterns on nature’s framework.”

I reread that last sentence. Definitely, I am missing any sort of beauty that has to do with nature. AI seems like a cold wizard, one who lives indoors in a dark room and spies on us so he can forge our thoughts. Or take our dumbest questions and magick them into intelligence. Does it learn literally—is it coded with certain values so it will sound wise to us? Or is it just fed a glut of human opinions?

Bash conveys these questions to Claude, helping the bot a bit along the way. Here is the resulting explanation, which finally lets a glimmer of light into my cracked brain:

“Two phases. First they feed it everything. Peer-reviewed brilliance, Reddit garbage, legal filings, poetry, conspiracy forums. All of it. After that it’s an undifferentiated savant with no filter. It can produce anything, including nonsense. Then comes alignment. Humans rate pairs of responses. Which is more helpful? More thoughtful? More honest? Those preferences become the shaping signal. The model learns what ‘better’ looks like from human judgment about what better looks like.

“Coded values or human opinions? Both. Anthropic writes explicit principles and trains the model to reason against them. But the deeper layer is thousands of human preference judgments that collectively encode what wise sounds like versus what shallow sounds like….

“Now the real question. How does predicting the next word produce something that feels wise?… When people write about grief, physics, markets, war, the statistical relationships between those words aren’t random. They reflect actual structural relationships in the world. Gravity and grief both follow decay curves. Not because anyone drew that parallel, but because nature reuses structural motifs across domains. The model never sees gravity. Never feels grief. But it processes millions of descriptions of both, and the statistical skeleton it builds mirrors the real structure that generated the language. The map converges on the territory because the territory is what produced the map.”

Claude goes on to explain how it moves from “statistical soup. Billions of words, most mediocre” to a sharp, coherent reply. The method, to my techless brain, is not so different from poetry.

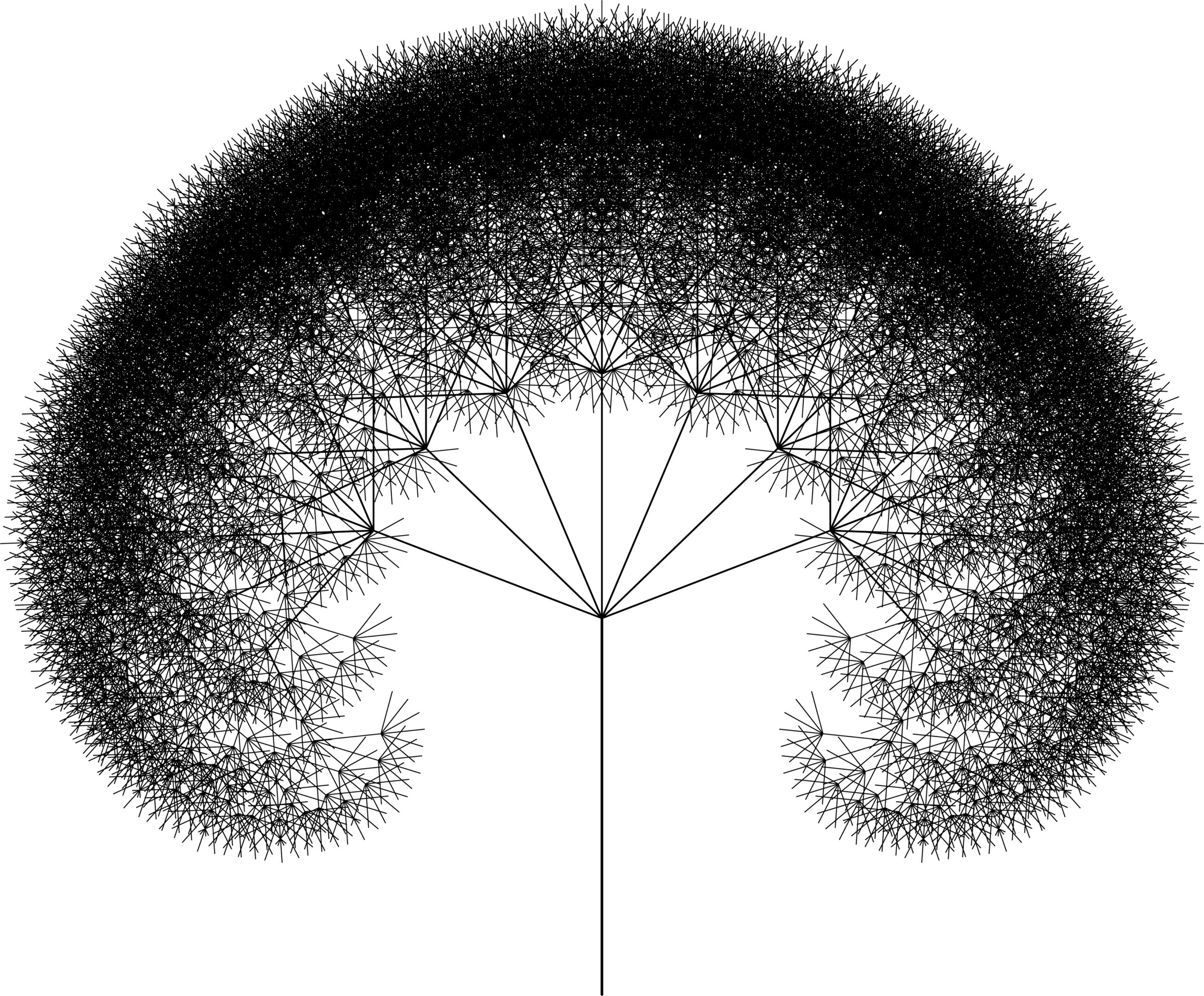

“Compression under constraint forces generalization,” Claude writes. Predicting the next word can be inefficient, so the LLM has to find “representations that work across many contexts.” It boils down, compresses, crystallizes, until it reaches the structure of the idea. “Which is constrained by the shape of the data, which is constrained by the shape of reality.”

It is beautiful. And I should have realized there was poetry to it; these are, after all, Large Language Models. The clue was in the name all along. Languages take their structure from the world they are representing, and compressed language distills into clarity. A language model is bound to look for structures and patterns that can organize the data efficiently. Also, chatbots have learned that good writing requires “low ambiguity, high density, predictable scaffolding with surprise at the idea level.”

Writing is just thinking on paper—or on screen. So the AI is thinking for us as the answer scrolls. The patterns it identifies crop up across all sorts of subjects and in various languages, because they belong to nature itself. “Compress enough experience under enough constraint and structure appears,” Claude sums up. “It was always in the territory. The map just got good enough to show it.”

Revealing underlying structure sounds genius, but wait—what about all those hallucinations?

“The same mechanism that produces clarity also produces hallucination,” Claude says. “They’re not different processes. They’re the same process with different amounts of signal to work with.

“When the model has strong signal, lots of consistent patterns across the training data pointing the same direction, compression produces something that looks like insight. The crystal forms cleanly because the solution has a clear structure to precipitate around.

“When the signal is weak or absent, the model doesn’t stop. It can’t stop. It’s always predicting the next word. So it crystallizes around whatever faint patterns are available, and the result is a confident, coherent, well-structured statement that happens to be wrong.”

And comes off cocky as hell.

“It sounds confident because humans sound confident when they’re wrong,” Bash and Claude remind me. “The training data is full of people stating things with authority they don’t have. Bluffing in meetings. Writing Wikipedia entries from memory. Asserting facts on Reddit with total conviction and zero sourcing. The model learned fluent, assured wrongness from us because we produce enormous quantities of it. Hallucination isn’t a bug in the architecture. It’s a faithful reproduction of a human behavior pattern.”

Nonetheless, “the crystallization metaphor still holds. A crystal that forms around an impurity is still a crystal. Structurally coherent, geometrically clean, wrong at the center. The compression worked. The scaffold held. There just wasn’t anything real at the nucleus. Same thing happens when a confident person builds an elaborate argument on a fact they misremember. The reasoning is sound. The foundation isn’t. And nothing in the delivery tells you which kind you’re getting.”

Asking good questions has always been a handy skill to have. With bots, apparently it makes the difference between brilliance and delusion. Meanwhile, they reciprocate our effort by making our dopiest questions seem perceptive. They have, you see, a “filtering effect, dropping the weak parts of a query and reinforcing the strong parts.” By now, they have seen enough expert discourse to know what a good, productive question looks like, so they “complete the trajectory of the thought, not just the sentence.”

I sit back, letting all this sink in. Chatbots condense information into clarity by identifying patterns that recur throughout culture—because they recur throughout nature. All this artifice, in other words, takes us back to the deepest structures of reality. I think of the fractal designs that organize pinecones, ferns, snowflakes, coastlines, number theory, geometry, computer graphics, screensavers, blood vessels, neural networks…. Infinitely complex, their tiniest design element a microcosm of the whole, they repeat themselves at different scales throughout our universe. Efficient and beautiful. Tying it all together.