Big Genomic Promise of Big Data

By Susan Gelman

August 11, 2015

As highlighted by the recent controversy over the genetic engineering of embryos with CRISPR/Cas9, genetics and genomics have advanced by leaps and bounds over the past twenty years. It is now relatively easy for us to obtain genetic information about ourselves (with 23andMe DNA kits, for example), and even about our pets. Whole-genome sequencing is now possible, albeit rather expensive, and it is predicted to become the future of modern medicine within the next five to ten years. There are tremendous advantages to having easily accessible genomic information, the most obvious being the capability to make medicine truly personalized. This would allow medical professionals to identify patients who are genetically at risk for certain diseases via biomarkers, as well as determine which treatments will be most effective based on genomic data.

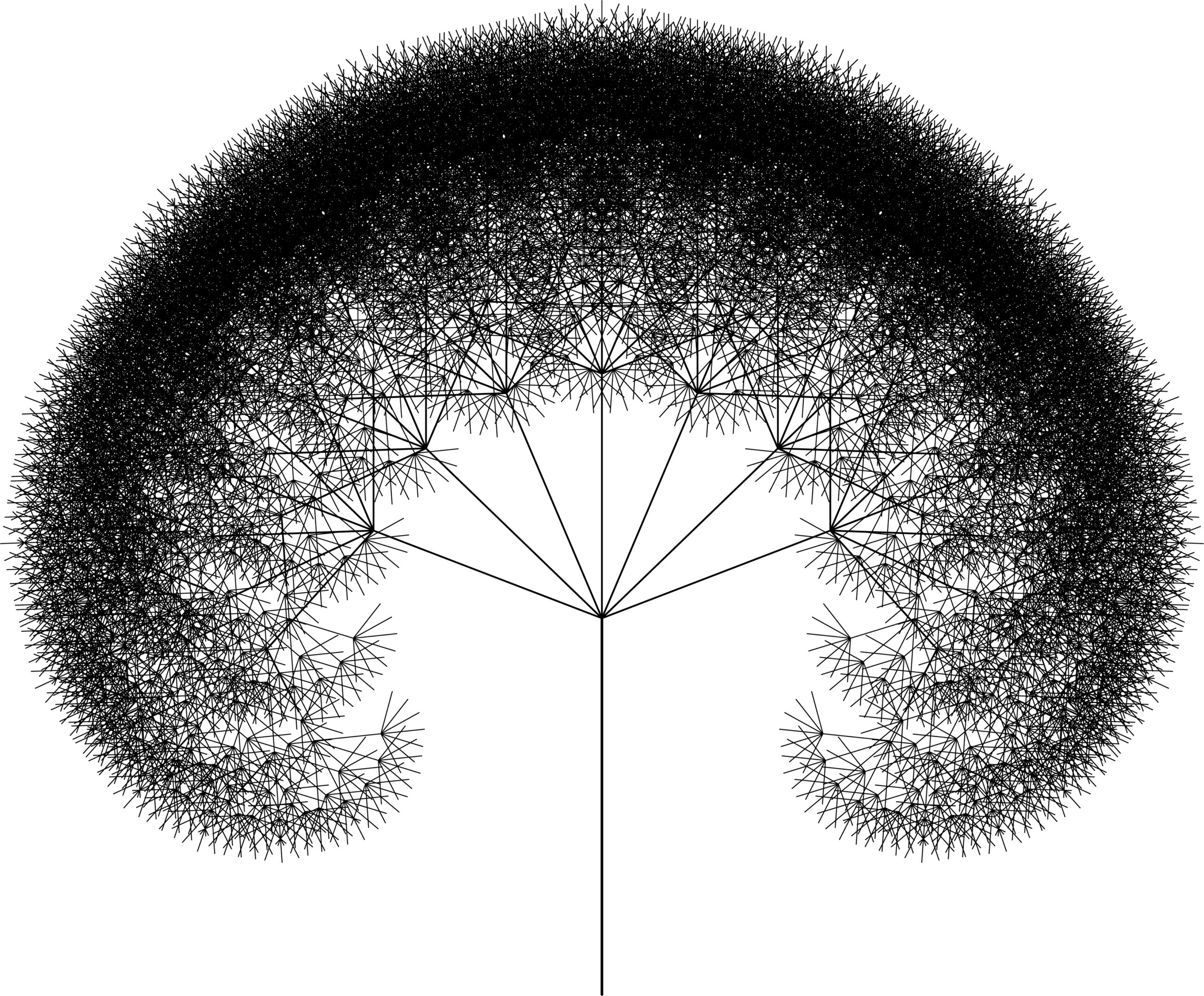

But as with any new technology, there are some serious hurdles to get past before we can all start sequencing ourselves. Data scientists have written fantastic algorithms and software packages that allow genomic data to be interpreted; however, this does not change the fact that the sheer amount of data generated during sequencing is enormous. Being able to interpret the data does not necessarily mean that we can handle and store it, so as sequencing demand grows, so must our data handling capabilities.

The massive size of these genomic data sets qualifies them as big data, but what does ‘big data’ actually mean? The buzzword is often thrown around casually, but one common definition states that big data comes from “multiple sources at an alarming velocity, volume, and variety.” This means that data complexity increases from all angles when it becomes big data, making storage especially difficult. Cloud storage is popular with individual users, but entrusting large amounts of important data to a third party can be a security and safety concern.

This is certainly not a new concern; researchers have discussed data storage and processing as an experimental bottleneck for quite some time. However, it is poised to become a much larger problem than it currently is, causing worry among biologists and geneticists. A very recent PLoS Biology study estimates that as much as 25 percent of the world’s population could have their genomes sequenced by the year 2025, with the estimate ranging from 100 million to 2 billion genomes. This alone is a daunting figure, and yet we also have to consider that sequencing produces much more data to be stored and analyzed than the single genome itself. This leaves us with a projected 1 zettabase of sequence data acquired per year, and the need to store 2 to 40 exabytes per year for genomics data alone.
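To put those figures side by side, here is a rough back-of-envelope sketch in Python. The genome counts, the 1 zettabase acquisition rate, and the 2 to 40 exabyte storage range are the ones quoted above; the 2025 world population of about 8 billion is an assumption added purely for illustration, and the function names are hypothetical. The bytes-per-base figure is a naive ratio of the two quoted numbers, not something the study itself reports.

```python
# Back-of-envelope check of the figures quoted in the article.
# ASSUMPTION (not from the article): world population in 2025 of ~8 billion.
WORLD_POPULATION_2025 = 8_000_000_000

GENOMES_LOW = 100_000_000      # 100 million genomes (quoted lower bound)
GENOMES_HIGH = 2_000_000_000   # 2 billion genomes (quoted upper bound)

ZETTA = 10**21  # SI prefix zetta
EXA = 10**18    # SI prefix exa

BASES_PER_YEAR = 1 * ZETTA     # 1 zettabase acquired per year (quoted)
STORAGE_LOW_BYTES = 2 * EXA    # 2 exabytes stored per year (quoted)
STORAGE_HIGH_BYTES = 40 * EXA  # 40 exabytes stored per year (quoted)


def share_of_population(genomes, population=WORLD_POPULATION_2025):
    """Fraction of the assumed population that a genome count represents."""
    return genomes / population


def bytes_per_base(storage_bytes, bases=BASES_PER_YEAR):
    """Naive ratio of bytes stored per year to bases acquired per year."""
    return storage_bytes / bases


if __name__ == "__main__":
    print(f"Low estimate:  {share_of_population(GENOMES_LOW):.2%} of population")
    print(f"High estimate: {share_of_population(GENOMES_HIGH):.2%} of population")
    print(f"Stored bytes per acquired base (low):  {bytes_per_base(STORAGE_LOW_BYTES)}")
    print(f"Stored bytes per acquired base (high): {bytes_per_base(STORAGE_HIGH_BYTES)}")
```

Under that population assumption, the 25 percent figure lines up with the upper bound of 2 billion genomes, while the lower bound is closer to 1 percent. The storage range also works out to far less than one byte per sequenced base, which suggests the storage estimate does not assume keeping every raw read.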

Fortunately, the authors are optimistic with regard to data storage, as new methods of sequencing and analysis will hopefully remove the need to retain all raw data in the near future. Similarly, as we progress in our ability to generate data, we must also make strides in our ability to compress data into more manageable files. In 2014 the National Institutes of Health (NIH) awarded $32 million through the Big Data to Knowledge initiative for computational research, as well as training opportunities. The project aims to extract useful knowledge from big data acquisitions and is intended to expand the accessibility of raw biomedical data. The expectation is that this initiative will help solve the problem exemplified by the PLoS study and will help the ‘omics’ (genomics, proteomics, metabolomics) become further integrated into the health and medical fields.